Applying OpenMP Parallel Programming: Accelerating an OpenCV Image-Stitching Algorithm

Section: PHP tutorials | Posted: 2017-02-23 09:04:10

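OpenMP is a parallel programming model for shared-memory multiprocessor programming. It provides a high-level abstraction of parallelism. By adding a few simple directives to a program, you can write efficient parallel code without handling the low-level details of how the parallelism is implemented, which lowers the difficulty and complexity of parallel programming. Precisely because OpenMP is so simple to use, however, it is not well suited to situations that need complex synchronization and mutual exclusion between threads.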

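Stitching images in OpenCV with SIFT or SURF features requires extracting features and computing descriptors for each of the two or more images. After that come matching the feature points between images and transforming the images. Feature extraction and description is the most time-consuming part of the whole pipeline. The work for each image is also independent of the others, so this step can be accelerated with OpenMP.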

Below is the original SIFT image-stitching program, without OpenMP acceleration:

```cpp
#include <algorithm>
#include <iostream>
#include <vector>

#include "opencv2/highgui/highgui.hpp"
#include "opencv2/imgproc/imgproc.hpp"
#include "opencv2/calib3d/calib3d.hpp"
#include "opencv2/nonfree/nonfree.hpp"
#include "opencv2/legacy/legacy.hpp"
#include "omp.h"

using namespace cv;

// Compute where a point of the original image lands in the target image after the matrix transform
Point2f getTransformPoint(const Point2f originalPoint, const Mat &transformMaxtri);

int main(int argc, char *argv[])
{
    double startTime = omp_get_wtime();  // omp_get_wtime() returns a double

    Mat image01 = imread("Test01.jpg");
    Mat image02 = imread("Test02.jpg");
    if (image01.empty() || image02.empty())
    {
        std::cerr << "Failed to load Test01.jpg / Test02.jpg" << std::endl;
        return -1;
    }
    imshow("Image 1", image01);
    imshow("Image 2", image02);

    // Grayscale conversion (imread loads color images in BGR order)
    Mat image1, image2;
    cvtColor(image01, image1, CV_BGR2GRAY);
    cvtColor(image02, image2, CV_BGR2GRAY);

    // Detect keypoints; for SIFT the first argument is the number of best features to keep
    SiftFeatureDetector siftDetector(800);
    std::vector<KeyPoint> keyPoint1, keyPoint2;
    siftDetector.detect(image1, keyPoint1);
    siftDetector.detect(image2, keyPoint2);

    // Compute descriptors in preparation for matching
    SiftDescriptorExtractor siftDescriptor;
    Mat imageDesc1, imageDesc2;
    siftDescriptor.compute(image1, keyPoint1, imageDesc1);
    siftDescriptor.compute(image2, keyPoint2, imageDesc2);

    double endTime = omp_get_wtime();
    std::cout << "Time without OpenMP: " << endTime - startTime << " s" << std::endl;

    // Match descriptors and sort the matches by distance (best match first)
    FlannBasedMatcher matcher;
    std::vector<DMatch> matchePoints;
    matcher.match(imageDesc1, imageDesc2, matchePoints, Mat());
    std::sort(matchePoints.begin(), matchePoints.end());

    // Take the N best matches (findHomography needs at least 4)
    const int N = 10;
    if ((int)matchePoints.size() < N)
    {
        std::cerr << "Not enough matches" << std::endl;
        return -1;
    }
    std::vector<Point2f> imagePoints1, imagePoints2;
    for (int i = 0; i < N; i++)
    {
        imagePoints1.push_back(keyPoint1[matchePoints[i].queryIdx].pt);
        imagePoints2.push_back(keyPoint2[matchePoints[i].trainIdx].pt);
    }

    // 3x3 projective mapping (homography) from image 1 to image 2
    Mat homo = findHomography(imagePoints1, imagePoints2, CV_RANSAC);
    Mat adjustMat = (Mat_<double>(3, 3) << 1.0, 0, image01.cols, 0, 1.0, 0, 0, 0, 1.0);
    Mat adjustHomo = adjustMat * homo;

    // Position of the strongest match in the original and transformed images, used to locate the seam
    Point2f originalLinkPoint, targetLinkPoint, basedImagePoint;
    originalLinkPoint = keyPoint1[matchePoints[0].queryIdx].pt;
    targetLinkPoint = getTransformPoint(originalLinkPoint, adjustHomo);
    basedImagePoint = keyPoint2[matchePoints[0].trainIdx].pt;

    // Image registration: warp image 1 onto the output canvas
    Mat imageTransform1;
    warpPerspective(image01, imageTransform1, adjustHomo, Size(image02.cols + image01.cols + 110, image02.rows));

    // Blend the overlap to the left of the strongest match so the seam transitions smoothly without an abrupt jump
    Mat image1Overlap, image2Overlap;  // overlapping parts of image 1 and image 2
    image1Overlap = imageTransform1(Rect(Point(targetLinkPoint.x - basedImagePoint.x, 0), Point(targetLinkPoint.x, image02.rows)));
    image2Overlap = image02(Rect(0, 0, image1Overlap.cols, image1Overlap.rows));
    Mat image1ROICopy = image1Overlap.clone();  // copy of image 1's overlap
    for (int i = 0; i < image1Overlap.rows; i++)
    {
        for (int j = 0; j < image1Overlap.cols; j++)
        {
            double weight = (double)j / image1Overlap.cols;  // blend weight that changes with distance
            for (int c = 0; c < 3; c++)
            {
                image1Overlap.at<Vec3b>(i, j)[c] = saturate_cast<uchar>((1 - weight) * image1ROICopy.at<Vec3b>(i, j)[c] + weight * image2Overlap.at<Vec3b>(i, j)[c]);
            }
        }
    }

    // The non-overlapping part of image 2 is attached directly after the seam
    Mat ROIMat = image02(Rect(Point(image1Overlap.cols, 0), Point(image02.cols, image02.rows)));
    ROIMat.copyTo(Mat(imageTransform1, Rect(targetLinkPoint.x, 0, ROIMat.cols, image02.rows)));

    namedWindow("Stitched result", 0);
    imshow("Stitched result", imageTransform1);
    imwrite("D:\\stitched_result.jpg", imageTransform1);
    waitKey();
    return 0;
}

// Compute where a point of the original image lands in the target image after the matrix transform
Point2f getTransformPoint(const Point2f originalPoint, const Mat &transformMaxtri)
{
    Mat originelP, targetP;
    originelP = (Mat_<double>(3, 1) << originalPoint.x, originalPoint.y, 1.0);
    targetP = transformMaxtri * originelP;
    // Convert back from homogeneous coordinates
    float x = targetP.at<double>(0, 0) / targetP.at<double>(2, 0);
    float y = targetP.at<double>(1, 0) / targetP.at<double>(2, 0);
    return Point2f(x, y);
}
```
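The geometry in the program above can be summarized as follows. The notation is introduced here for illustration: $H$ is `homo`, $A$ is `adjustMat`, $W_1$ is `image01.cols`, and $W$ is `image1Overlap.cols`. `getTransformPoint` applies the adjusted homography to a point in homogeneous coordinates and then divides by the third component. The blending loop mixes the two overlap regions with a weight $j/W$ that grows linearly from 0 at the left edge of the overlap toward 1 at its right edge:

$$
\begin{pmatrix} x' \\ y' \\ w' \end{pmatrix}
= A H \begin{pmatrix} x \\ y \\ 1 \end{pmatrix},
\qquad
A = \begin{pmatrix} 1 & 0 & W_1 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{pmatrix},
\qquad
(u, v) = \left( \frac{x'}{w'}, \frac{y'}{w'} \right)
$$

$$
I(i, j) = \left(1 - \frac{j}{W}\right) I_1(i, j) + \frac{j}{W}\, I_2(i, j), \qquad 0 \le j < W
$$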

Image 1:

Image 2:

Stitched result:

On my machine, the version without OpenMP takes 4.7 s on average.

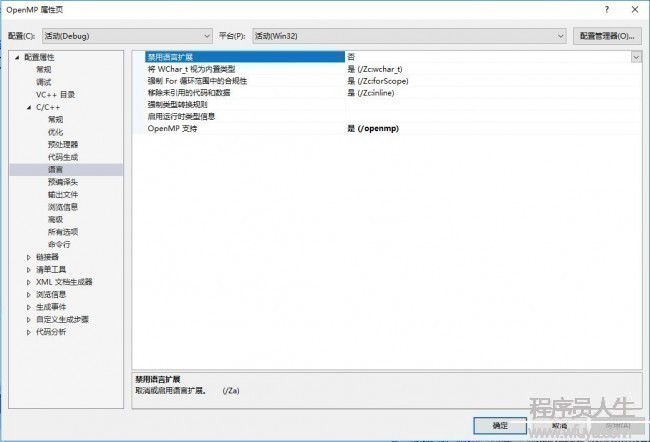

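Using OpenMP is also simple, because Visual Studio has built-in OpenMP support. Right-click the project -> Properties -> Configuration Properties -> C/C++ -> Language -> OpenMP Support, and select Yes (/openmp).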

Then include the OpenMP header "omp.h" in the program:

```cpp
#include <algorithm>
#include <iostream>
#include <vector>

#include "opencv2/highgui/highgui.hpp"
#include "opencv2/imgproc/imgproc.hpp"
#include "opencv2/calib3d/calib3d.hpp"
#include "opencv2/nonfree/nonfree.hpp"
#include "opencv2/legacy/legacy.hpp"
#include "omp.h"

using namespace cv;

// Compute where a point of the original image lands in the target image after the matrix transform
Point2f getTransformPoint(const Point2f originalPoint, const Mat &transformMaxtri);

int main(int argc, char *argv[])
{
    double startTime = omp_get_wtime();

    Mat image01, image02;
    Mat image1, image2;
    std::vector<KeyPoint> keyPoint1, keyPoint2;
    Mat imageDesc1, imageDesc2;

    // Use OpenMP's sections directive: each section below is executed once, by one thread
#pragma omp parallel sections
    {
#pragma omp section
        {
            image01 = imread("Test01.jpg");
            if (!image01.empty())
            {
                // Each section creates its own detector/extractor, so no object is shared between threads
                SiftFeatureDetector siftDetector(800);  // keep at most the 800 strongest keypoints
                SiftDescriptorExtractor siftDescriptor;
                cvtColor(image01, image1, CV_BGR2GRAY);                 // grayscale conversion
                siftDetector.detect(image1, keyPoint1);                 // detect keypoints
                siftDescriptor.compute(image1, keyPoint1, imageDesc1);  // descriptors for matching
            }
        }
#pragma omp section
        {
            image02 = imread("Test02.jpg");
            if (!image02.empty())
            {
                SiftFeatureDetector siftDetector(800);
                SiftDescriptorExtractor siftDescriptor;
                cvtColor(image02, image2, CV_BGR2GRAY);
                siftDetector.detect(image2, keyPoint2);
                siftDescriptor.compute(image2, keyPoint2, imageDesc2);
            }
        }
    }

    if (image01.empty() || image02.empty())
    {
        std::cerr << "Failed to load Test01.jpg / Test02.jpg" << std::endl;
        return -1;
    }
    // HighGUI window calls are kept on the main thread, outside the parallel region
    imshow("Image 1", image01);
    imshow("Image 2", image02);

    double endTime = omp_get_wtime();
    std::cout << "Time with OpenMP: " << endTime - startTime << " s" << std::endl;

    // Match descriptors and sort the matches by distance (best match first)
    FlannBasedMatcher matcher;
    std::vector<DMatch> matchePoints;
    matcher.match(imageDesc1, imageDesc2, matchePoints, Mat());
    std::sort(matchePoints.begin(), matchePoints.end());

    // Take the N best matches (findHomography needs at least 4)
    const int N = 10;
    if ((int)matchePoints.size() < N)
    {
        std::cerr << "Not enough matches" << std::endl;
        return -1;
    }
    std::vector<Point2f> imagePoints1, imagePoints2;
    for (int i = 0; i < N; i++)
    {
        imagePoints1.push_back(keyPoint1[matchePoints[i].queryIdx].pt);
        imagePoints2.push_back(keyPoint2[matchePoints[i].trainIdx].pt);
    }

    // 3x3 projective mapping (homography) from image 1 to image 2
    Mat homo = findHomography(imagePoints1, imagePoints2, CV_RANSAC);
    Mat adjustMat = (Mat_<double>(3, 3) << 1.0, 0, image01.cols, 0, 1.0, 0, 0, 0, 1.0);
    Mat adjustHomo = adjustMat * homo;

    // Position of the strongest match in the original and transformed images, used to locate the seam
    Point2f originalLinkPoint, targetLinkPoint, basedImagePoint;
    originalLinkPoint = keyPoint1[matchePoints[0].queryIdx].pt;
    targetLinkPoint = getTransformPoint(originalLinkPoint, adjustHomo);
    basedImagePoint = keyPoint2[matchePoints[0].trainIdx].pt;

    // Image registration: warp image 1 onto the output canvas
    Mat imageTransform1;
    warpPerspective(image01, imageTransform1, adjustHomo, Size(image02.cols + image01.cols + 110, image02.rows));

    // Blend the overlap to the left of the strongest match so the seam transitions smoothly without an abrupt jump
    Mat image1Overlap, image2Overlap;  // overlapping parts of image 1 and image 2
    image1Overlap = imageTransform1(Rect(Point(targetLinkPoint.x - basedImagePoint.x, 0), Point(targetLinkPoint.x, image02.rows)));
    image2Overlap = image02(Rect(0, 0, image1Overlap.cols, image1Overlap.rows));
    Mat image1ROICopy = image1Overlap.clone();  // copy of image 1's overlap
    for (int i = 0; i < image1Overlap.rows; i++)
    {
        for (int j = 0; j < image1Overlap.cols; j++)
        {
            double weight = (double)j / image1Overlap.cols;  // blend weight that changes with distance
            for (int c = 0; c < 3; c++)
            {
                image1Overlap.at<Vec3b>(i, j)[c] = saturate_cast<uchar>((1 - weight) * image1ROICopy.at<Vec3b>(i, j)[c] + weight * image2Overlap.at<Vec3b>(i, j)[c]);
            }
        }
    }

    // The non-overlapping part of image 2 is attached directly after the seam
    Mat ROIMat = image02(Rect(Point(image1Overlap.cols, 0), Point(image02.cols, image02.rows)));
    ROIMat.copyTo(Mat(imageTransform1, Rect(targetLinkPoint.x, 0, ROIMat.cols, image02.rows)));

    namedWindow("Stitched result", 0);
    imshow("Stitched result", imageTransform1);
    imwrite("D:\\stitched_result.jpg", imageTransform1);
    waitKey();
    return 0;
}

// Compute where a point of the original image lands in the target image after the matrix transform
Point2f getTransformPoint(const Point2f originalPoint, const Mat &transformMaxtri)
{
    Mat originelP, targetP;
    originelP = (Mat_<double>(3, 1) << originalPoint.x, originalPoint.y, 1.0);
    targetP = transformMaxtri * originelP;
    // Convert back from homogeneous coordinates
    float x = targetP.at<double>(0, 0) / targetP.at<double>(2, 0);
    float y = targetP.at<double>(1, 0) / targetP.at<double>(2, 0);
    return Point2f(x, y);
}
```

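In OpenMP, the `for` directive distributes the iterations of a loop among threads. The `sections` directive distributes non-iterative blocks of work: each `#pragma omp section` block is executed exactly once, by one thread of the team. In the program above, this means two threads perform feature extraction and description for the two images at the same time. With OpenMP, the average time drops to 2.5 s, compared with 4.7 s without it, a speedup of about 1.9×.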
