Hadoop Yarn(一)―― 单机伪分布式环境安装

栏目:互联网时间:2014-11-13 09:07:00

HamaWhite(QQ:530422429)原创作品,转载请注明出处:http://write.blog.csdn.net/postedit/40556267。

本文是根据Hadoop官网安装教程写的Hadoop YARN在单机伪散布式环境下的安装报告,仅供参考。

系统:Ubuntu14.04

Hadoop版本:hadoop⑵.5.0

Java版本:openjdk⑴.7.0_55

2. 下载Hadoop⑵.5.0,http://mirrors.cnnic.cn/apache/hadoop/common/hadoop⑵.5.0/hadoop⑵.5.0.tar.gz

本文的$HADOOP_HOME为:/home/baisong/hadoop⑵.5.0(用户名为baisong)。

在 ~/.bashrc文件中添加环境变量,以下:

export HADOOP_HOME=/home/baisong/hadoop⑵.5.0

然后编译,命令以下:

$ source ~/.bashrc

3. 安装JDK,并设置JAVA_HOME环境变量。在/etc/profile文件最后添加以下内容

export JAVA_HOME=/usr/lib/jvm/java⑺-openjdk-i386 //根据自己Java安装目录而定

export PATH=$JAVA_HOME/bin:$PATH

输入以下命令使配置生效

$ source /etc/profile

4. 配置SSH。首先生成秘钥,命令以下,然后1路回车确认,不需要任何输入。

$ ssh-keygen -t rsa 然后把公钥写入authorized_keys文件中,命令以下:

$ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

最后,输入下述命令,按提示输入 yes 便可。

$ ssh localhost

5. 修改Hadoop配置文件,进入${HADOOP_HOME}/etc/hadoop/目录。

1)设置环境变量,hadoop-env.sh中添加Java安装目录,以下:

export JAVA_HOME=/usr/lib/jvm/java⑺-openjdk-i386

2)修改core-site.xml,添加以下内容。

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/baisong/hadooptmp</value>

</property>

注:hadoop.tmp.dir项可选(上述设置需手动创建hadooptmp文件夹)。

3)修改hdfs-site.xml,添加以下内容“。

<property>

<name>dfs.repliacation</name>

<value>1</value>

</property>

4)将mapred-site.xml.template重命名为mapred-site.xml,并添加以下内容。

$ mv mapred-site.xml.template mapred-site.xml //重命名

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

5)修改yarn-site.xml,添加以下内容。

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

6. 格式化HDFS,命令以下:

bin/hdfs namenode -format 注释:bin/hadoop namenode -format命令已过时

格式化成功会在/home/baisong/hadooptmp创建dfs文件夹。

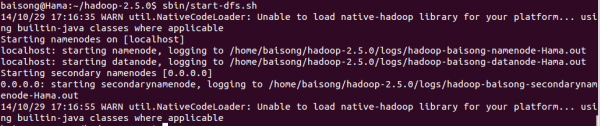

7.启动HDFS,命令以下:

$ sbin/start-dfs.sh

遇到以下毛病:

14/10/29 16:49:01 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Starting namenodes on [OpenJDK Server VM warning: You have loaded library /home/baisong/hadoop⑵.5.0/lib/native/libhadoop.so.1.0.0

which might have disabled stack guard. The VM will try to fix the stack guard now.

It's highly recommended that you fix the library with 'execstack -c <libfile>', or link it with '-z noexecstack'.

localhost]

sed: -e expression #1, char 6: unknown option to `s'

VM: ssh: Could not resolve hostname vm: Name or service not known

library: ssh: Could not resolve hostname library: Name or service not known

have: ssh: Could not resolve hostname have: Name or service not known

which: ssh: Could not resolve hostname which: Name or service not known

might: ssh: Could not resolve hostname might: Name or service not known

warning:: ssh: Could not resolve hostname warning:: Name or service not known

loaded: ssh: Could not resolve hostname loaded: Name or service not known

have: ssh: Could not resolve hostname have: Name or service not known

Server: ssh: Could not resolve hostname server: Name or service not known

分析缘由知,没有设置 HADOOP_COMMON_LIB_NATIVE_DIR和HADOOP_OPTS环境变量,在 ~/.bashrc文件中添加以下内容并编译。 export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib"

$ source ~/.bashrc

重新启动HDFS,输出以下,表示启动成功。

可以用过Web界面来查看NameNode运行状态,URL为 http://localhost:50070

停止HDFS的命令为:

$ sbin/stop-dfs.sh

8. 启动YARN,命令以下:

$ sbin/start-yarn.sh

可以用过Web界面来查看NameNode运行状态,URL为 http://localhost:8088

停止HDFS的命令为:

$ sbin/stop-yarn.sh

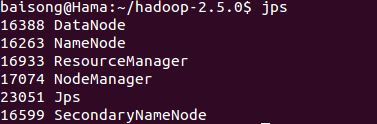

上述HDFS和YARN启动完成后,可通过jps命令查看是不是启动成功。

9. 运行测试程序。

1)测试计算PI,命令以下:

$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples⑵.5.0.jar pi 20 10

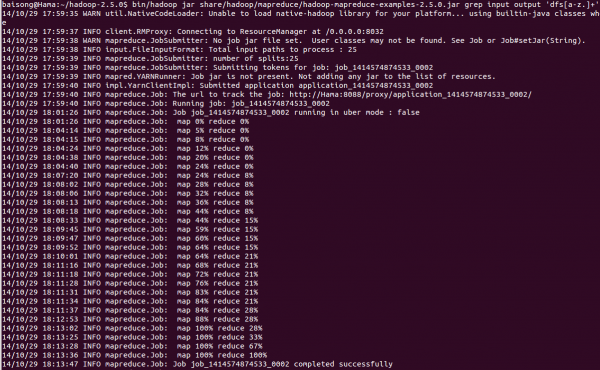

2)测试 grep,首先需要上传输入文件到HDFS上,命令以下:

$ bin/hdfs dfs -put etc/hadoop input

运行grep程序,命令以下:

$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples⑵.5.0.jar grep input output 'dfs[a-z.]+'

运行结果输出以下:

10. 添加环境变量,方便使用start-dfs.sh、start-yarn.sh等命令(可选)。

在 ~/.bashrc文件中添加环境变量,以下:

export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

然后编译,命令以下:

$ source ~/.bashrc

下图是 ~/.bashrc文件中添加的变量,以便参考。

------分隔线----------------------------

------分隔线----------------------------